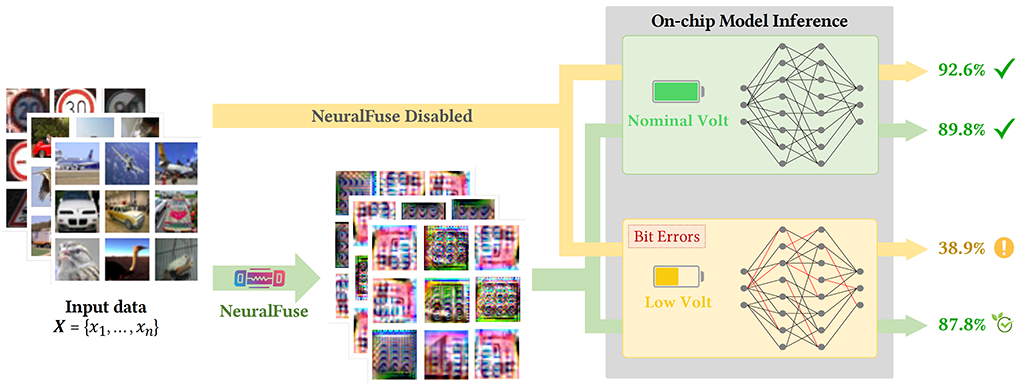

Deep neural networks (DNNs) have become ubiquitous in machine learning, but their energy consumption remains problematically high. Lowering the supply voltage is an effective way to cut this consumption, but scaling it down too aggressively degrades accuracy, because it causes random bit flips in the static random-access memory (SRAM) where model parameters are stored.
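To make this failure mode concrete, the sketch below injects random bit errors into quantized weights. It assumes, for illustration only, that each weight is stored in SRAM as a signed 8-bit integer and that every stored bit flips independently with probability equal to the bit-error rate. `flip_bits_int8` and `inject_bit_errors` are hypothetical helper names, not part of NeuralFuse.

```python
import random


def flip_bits_int8(value: int, ber: float, rng: random.Random) -> int:
    """Flip each bit of a signed 8-bit weight independently with probability `ber`.

    Assumption for illustration: the weight is stored as a two's-complement byte.
    """
    raw = value & 0xFF  # the byte as it would sit in SRAM
    for bit in range(8):
        if rng.random() < ber:
            raw ^= 1 << bit  # this bit cell failed at the reduced voltage
    # Reinterpret the stored byte as a signed int8 again.
    return raw - 256 if raw >= 128 else raw


def inject_bit_errors(weights: list[int], ber: float, seed: int = 0) -> list[int]:
    """Return a perturbed copy of int8 `weights` at bit-error rate `ber`."""
    rng = random.Random(seed)
    return [flip_bits_int8(w, ber, rng) for w in weights]


if __name__ == "__main__":
    weights = [3, -5, 0, 127, 64, -100]
    # A 1% bit-error rate, as in the results quoted on this page.
    print(inject_bit_errors(weights, ber=0.01, seed=1))
```

Because bits are flipped in the stored two's-complement byte, a single error in the most significant bit can change a weight drastically: flipping it for the weight 3 (`0b00000011` to `0b10000011`) yields -125.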

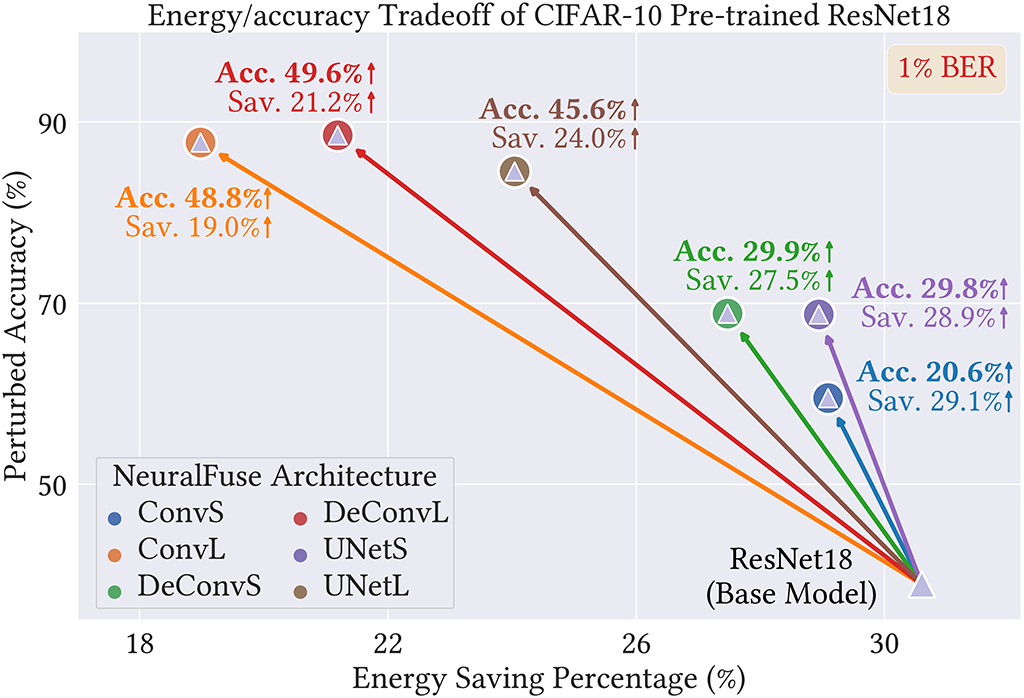

To address this challenge, we have developed NeuralFuse, a novel add-on module that handles the energy-accuracy tradeoff in low-voltage regimes by learning input transformations and using them to generate error-resistant data representations, thereby protecting DNN accuracy in both nominal and low-voltage scenarios. As well as being easy to implement, NeuralFuse can be readily applied to DNNs with limited access, such as cloud-based APIs that are accessed remotely or non-configurable hardware. Our experimental results demonstrate that, at a 1% bit-error rate, NeuralFuse can reduce SRAM access energy by up to 24% while recovering accuracy by up to 57%. To the best of our knowledge, this is the first approach to addressing low-voltage-induced bit errors that requires no model retraining.
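The idea of an add-on that learns an input transformation while the base model stays frozen can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the NeuralFuse implementation: the frozen base model is a linear classifier with hypothetical int8 weights, the learned transformation is a per-feature affine map `a * x + b` (NeuralFuse itself learns a neural generator over images), and the training objective, nominal loss plus `lam` times the average loss over a fixed sample of bit-error-perturbed copies of the weights, is an assumed form. Only `a` and `b` are updated; the base weights are never changed.

```python
import math
import random

# Hypothetical toy setup: int8 weights dequantized with this step size.
SCALE = 1 / 64


def flip_bits_int8(value: int, ber: float, rng: random.Random) -> int:
    """Flip each bit of a signed int8 independently with probability `ber`."""
    raw = value & 0xFF
    for bit in range(8):
        if rng.random() < ber:
            raw ^= 1 << bit
    return raw - 256 if raw >= 128 else raw


def softplus(z: float) -> float:
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def model_loss_and_grad(w, a, b, data):
    """Mean logistic loss of the frozen linear model `w` on inputs a * x + b,
    with its gradient with respect to the transform parameters (a, b) only."""
    loss, ga, gb = 0.0, [0.0] * len(a), [0.0] * len(b)
    for x, y in data:  # labels y are -1 or +1
        score = sum(wi * (ai * xi + bi) for wi, ai, bi, xi in zip(w, a, b, x))
        loss += softplus(-y * score)
        d_score = -y * sigmoid(-y * score)  # d/d(score) of softplus(-y * score)
        for i, (wi, xi) in enumerate(zip(w, x)):
            ga[i] += d_score * wi * xi
            gb[i] += d_score * wi
    n = len(data)
    return loss / n, [g / n for g in ga], [g / n for g in gb]


def objective(w_nominal, perturbed, a, b, data, lam):
    """Assumed objective: nominal loss + lam * mean loss over perturbed models."""
    loss, ga, gb = model_loss_and_grad(w_nominal, a, b, data)
    k = len(perturbed)
    for w_k in perturbed:
        lk, gak, gbk = model_loss_and_grad(w_k, a, b, data)
        loss += lam * lk / k
        ga = [g + lam * gk / k for g, gk in zip(ga, gak)]
        gb = [g + lam * gk / k for g, gk in zip(gb, gbk)]
    return loss, ga, gb


def train_transform(q_weights, data, ber=0.01, n_models=8, lam=1.0,
                    lr=0.05, steps=200, seed=0):
    """Fit the affine input transform by gradient descent; weights stay frozen."""
    rng = random.Random(seed)
    w_nominal = [q * SCALE for q in q_weights]
    # A fixed sample of bit-error-perturbed copies of the frozen weights.
    perturbed = [[flip_bits_int8(q, ber, rng) * SCALE for q in q_weights]
                 for _ in range(n_models)]
    a = [1.0] * len(q_weights)  # start from the identity transform
    b = [0.0] * len(q_weights)
    for _ in range(steps):
        _, ga, gb = objective(w_nominal, perturbed, a, b, data, lam)
        a = [ai - lr * g for ai, g in zip(a, ga)]
        b = [bi - lr * g for bi, g in zip(b, gb)]
    return a, b, w_nominal, perturbed
```

Starting from the identity map, gradient descent on the transform parameters lowers the combined nominal and perturbed loss while the base weights stay untouched; in this toy, `lam` controls how much weight the perturbed models receive relative to the nominal one.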

Boosts DNN Accuracy Under Low Power: NeuralFuse improves the accuracy of deep neural networks (DNNs) operating in low-power environments with random bit errors, without needing to retrain the models.

Protects DNN Accuracy Under Unstable Power: NeuralFuse protects DNN accuracy in both nominal and low-voltage scenarios.

Adapts to Limited-Access Settings: NeuralFuse supports deployment in scenarios with limited access to model details, using flexible training methods to adapt effectively across diverse DNN architectures.
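At inference time, an add-on of this kind only needs to sit in front of the model's prediction interface, which is what makes it usable when the model can be queried but not inspected. The sketch assumes the base model is exposed solely as a callable that maps an input vector to a label (a stand-in for a remote API); `FusedPipeline` and `accuracy` are hypothetical names, and the training methods mentioned above are not shown.

```python
from typing import Callable, Sequence

Vector = Sequence[float]


class FusedPipeline:
    """Hypothetical add-on wrapper: a learned input transform placed in front of
    an opaque model that can only be queried, never inspected or retrained."""

    def __init__(self, transform: Callable[[Vector], Vector],
                 predict: Callable[[Vector], int]) -> None:
        self.transform = transform  # the add-on's input transformation
        self.predict = predict      # opaque base model, e.g. a remote API call

    def __call__(self, x: Vector) -> int:
        # The base model only ever sees the transformed input.
        return self.predict(self.transform(x))


def accuracy(model: Callable[[Vector], int], data) -> float:
    """Fraction of (input, label) pairs the model classifies correctly."""
    correct = sum(1 for x, y in data if model(x) == y)
    return correct / len(data)


if __name__ == "__main__":
    hidden_w = [0.5, -0.25]  # toy weights standing in for a hidden model

    def black_box(x: Vector) -> int:
        score = sum(w * xi for w, xi in zip(hidden_w, x))
        return 1 if score > 0 else -1

    pipeline = FusedPipeline(lambda x: [2.0 * xi for xi in x], black_box)
    print(pipeline([1.0, 1.0]))  # prints 1
```

The wrapper never touches the base model's parameters, so the same pattern applies whether `predict` is a local function, a network call, or a fixed hardware accelerator.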

Reduces Energy Use with Proven Performance: At a 1% bit-error rate, NeuralFuse recovers accuracy by up to 57% and reduces SRAM access energy by up to 24%, as evaluated across diverse models (ResNet18, ResNet50, VGG11, VGG16, and VGG19) and datasets (CIFAR-10, CIFAR-100, GTSRB, and ImageNet-10).

Using a single base model (ResNet18), we illustrate the energy/accuracy tradeoff of six NeuralFuse implementations. The x-axis represents the percentage reduction in dynamic memory-access energy at low-voltage settings (base model protected by NeuralFuse), compared with the bit-error-free (nominal) voltage. The y-axis represents the perturbed accuracy (evaluated at low voltage) at a 1% bit-error rate.
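The x-axis quantity can be written as a simple percentage. The sketch below assumes that the access energy spent running the add-on itself is counted against the savings; the function name and the numbers in the usage example are hypothetical and are not measurements from the paper.

```python
def energy_saving_percent(e_nominal: float, e_base_low: float,
                          e_addon: float = 0.0) -> float:
    """Percentage reduction in memory-access energy relative to nominal voltage.

    e_nominal:  access energy of the base model at nominal voltage
    e_base_low: access energy of the same base model at the reduced voltage
    e_addon:    access energy spent running the add-on (assumed to count as overhead)
    """
    if e_nominal <= 0:
        raise ValueError("e_nominal must be positive")
    total_low = e_base_low + e_addon
    return 100.0 * (e_nominal - total_low) / e_nominal


if __name__ == "__main__":
    print(energy_saving_percent(100.0, 75.0, 5.0))  # prints 20.0
```

In this hypothetical example the base model's access energy drops by 25 units at low voltage, but 5 of those units are spent running the add-on, so the net reduction is 20%.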

@inproceedings{sun2024neuralfuse,
  title     = {{NeuralFuse: Learning to Recover the Accuracy of Access-Limited Neural Network Inference in Low-Voltage Regimes}},
  author    = {Hao-Lun Sun and Lei Hsiung and Nandhini Chandramoorthy and Pin-Yu Chen and Tsung-Yi Ho},
  booktitle = {Advances in Neural Information Processing Systems},
  publisher = {Curran Associates, Inc.},
  volume    = {37},
  year      = {2024}
}